Google has announced a further expansion of its long-standing partnership with Intel, committing to use multiple generations of Intel CPUs in its artificial intelligence (AI) data centers. Under this collaboration, Intel’s latest Xeon 6 processors will be used for AI model training and inference workloads, giving Intel a stronger position in an AI chip market long dominated by Nvidia.

Following the announcement, Intel’s stock rose 2%, while Alphabet’s stock fell by more than 1%. In a statement, Amin Vahdat, Google’s Chief Technology Officer for AI Infrastructure, said that Intel’s Xeon product roadmap gives Google confidence that it can continue to meet the growing performance and efficiency demands of future workloads. Specific financial terms and contract timelines have not been disclosed.

As the AI race enters its next phase, the role of the CPU in system architecture is once again in the spotlight. Dion Harris, head of AI infrastructure at Nvidia, recently pointed out that as agentic workloads increasingly push computing demands beyond GPUs, CPUs are becoming a bottleneck for AI computing. Intel CEO Lip-Bu Tan echoed this view in a statement, emphasizing that scaling AI requires not only accelerators but also a balanced system architecture.

Intel’s stock price has nearly tripled over the past year, buoyed by substantial outside investment. In August 2025, the US government took a roughly 10% stake in Intel, a deal the Trump administration used to emphasize the company’s ability to manufacture advanced chips in the United States. In September, Nvidia announced a $5 billion investment in Intel.

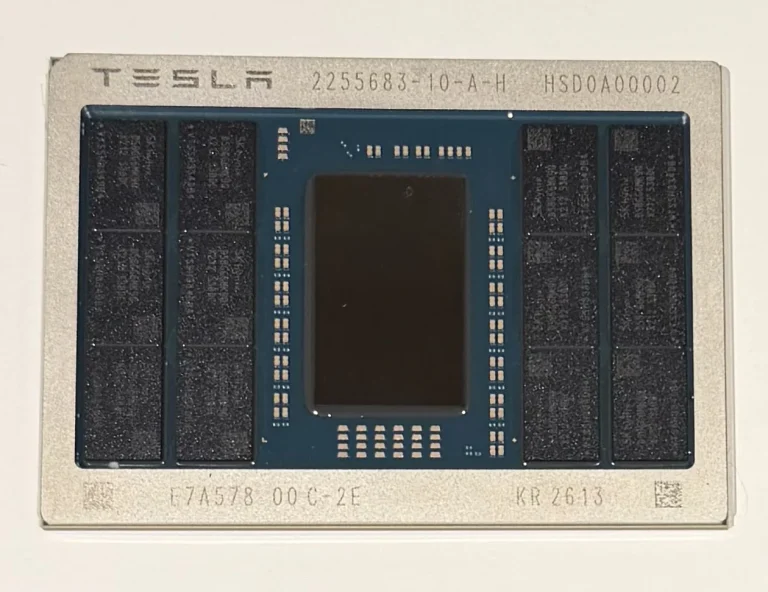

Intel is currently producing its latest Xeon processors on its state-of-the-art Intel 18A process technology at its new wafer fab in Arizona, which came online in 2025. In addition, Intel CEO Lip-Bu Tan revealed earlier this week that Tesla CEO Elon Musk has commissioned Intel to design, manufacture, and package custom chips for SpaceX, xAI, and Tesla’s Terafab project in Texas.

Besides CPU procurement, Google and Intel also reaffirmed their collaboration on Infrastructure Processing Units (IPUs), which has been under way since 2022. Intel says that in traditional data centers this programmable accelerator can take over additional tasks such as network traffic routing, storage management, data encryption, and virtualization software execution, thereby relieving the main CPU’s computational burden.

However, despite deepening its reliance on Intel, Google continues to develop its own computing chips. For over a decade, Google has been developing its proprietary TPUs, and in 2024 it launched its first custom CPU, the Arm-based “Axion.”